Unlocking the Value of AI for Your Business Series: Ep 3 - Explainable AI: Making AI Transparent, Trustworthy, and Understandable

- Branden Millward

- Feb 18

- 4 min read

Why Explainable AI Matters Today

Explainable AI (XAI) is increasingly viewed as essential rather than optional, especially as AI systems become more complex and influential. After all, it’s no use having the most advanced AI in the world if it’s never used due to a lack of trust.

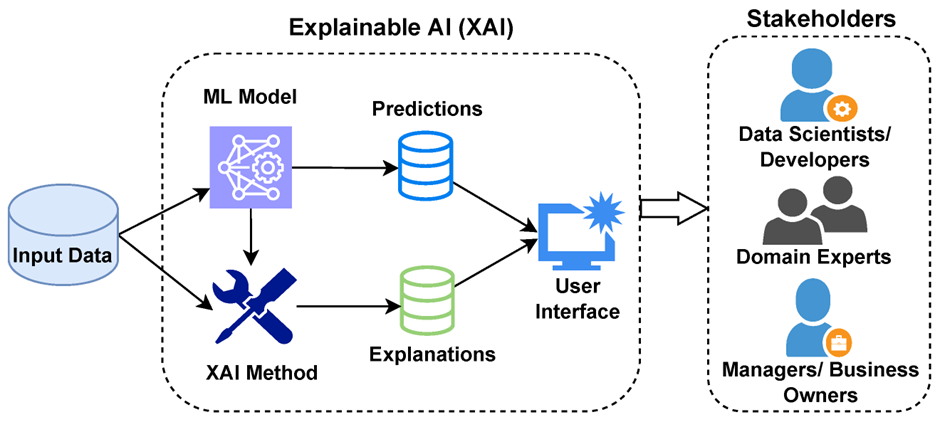

Understanding Explainable AI: What It Really Means

As models and agents have become more complex, they increasingly rely on Deep Learning techniques, which are commonly referred to as “Black Boxes”: people cannot see what is happening inside the model, or they believe its decisions are hidden. Explainable AI provides Transparency, Trust, Accountability and Visibility for safety and ethics purposes.

Explainable AI techniques can be classified by 3 characteristics with 2 options each, giving 6 categories:

Application Stage

Ante-hoc: The model is explainable by design. These are generally interpretable models, such as regression models, whose equations explain their decisions.

Post-hoc: The model needs an explainability/interpretability technique applied afterwards.
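To make the ante-hoc idea concrete, here is a minimal sketch of a linear scoring model where the equation itself is the explanation. The feature names, coefficients and applicant values are hypothetical and chosen purely for illustration; they do not come from a real model.

```python
# Hypothetical coefficients for an illustrative linear credit score.
INTERCEPT = 0.50
COEFFICIENTS = {
    "income_thousands": 0.004,   # higher income raises the score
    "interest_rate_pct": -0.02,  # higher interest rate lowers the score
    "term_years": -0.01,         # longer term lowers the score
}


def predict(applicant):
    """Score = intercept + sum(coefficient * feature value)."""
    return INTERCEPT + sum(COEFFICIENTS[name] * applicant[name] for name in COEFFICIENTS)


def explain(applicant):
    """Each term of the equation is that feature's contribution to the score."""
    return {name: COEFFICIENTS[name] * applicant[name] for name in COEFFICIENTS}


applicant = {"income_thousands": 50, "interest_rate_pct": 10, "term_years": 5}
score = predict(applicant)
contributions = explain(applicant)

# Print the contributions, largest effect first, then the final score.
for name, value in sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"{name:>18}: {value:+.3f}")
print(f"{'score':>18}: {score:.3f}")
```

Because the contributions and the intercept add up to the score, no separate explainability technique is needed. That is the trade a business makes when it chooses an interpretable model over a more complex one.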

Model Dependency

Is the XAI technique model-agnostic or model-specific? In other words, can it be used with any type of model, or is it restricted to particular types such as Neural Networks or Decision Trees?

Interpretability Scope

Techniques are split into:

Global Explainability

Explains how the entire model works overall. Example: “These features are the most important across all predictions.”

Local Explainability

Explains why the model made one specific decision. Example: “This student was predicted to be at risk because of decreased attendance.”
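The difference between the two scopes can be sketched with a small table of per-prediction attributions. The student IDs, feature names and numbers below are hypothetical; in practice the attributions would come from an explainability technique rather than being typed in by hand.

```python
from statistics import mean

# Hypothetical attributions: how much each feature pushed a student's
# "at risk" prediction up (+) or down (-).
attributions = {
    "student_a": {"attendance": 0.30, "grades": 0.10, "late_submissions": 0.05},
    "student_b": {"attendance": -0.20, "grades": -0.15, "late_submissions": 0.02},
    "student_c": {"attendance": 0.25, "grades": -0.05, "late_submissions": 0.10},
}


def local_explanation(student_id):
    """Local: rank the features behind ONE prediction."""
    row = attributions[student_id]
    return sorted(row.items(), key=lambda kv: abs(kv[1]), reverse=True)


def global_importance():
    """Global: average absolute attribution per feature across ALL predictions."""
    features = next(iter(attributions.values())).keys()
    scores = {f: mean(abs(row[f]) for row in attributions.values()) for f in features}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


print("Why was student_a flagged?", local_explanation("student_a"))
print("What matters overall?     ", global_importance())
```

Averaging absolute values is one common way to roll local attributions up into a global ranking, but it is a design choice rather than the only option.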

There are a few commonly used XAI techniques that produce explainable outputs. SHAP and LIME are the most common, and both are post-hoc techniques applied to a model after it has been trained.

SHAP (SHapley Additive exPlanations)

Shows how much each feature contributes to a prediction. For example, in a loan repayment model, SHAP can show that longer terms and higher interest rates have a negative impact on the predicted likelihood of repaying a loan.
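The idea behind SHAP is the Shapley value from game theory: a feature's contribution is its average marginal effect over every order in which features could be "switched on". The sketch below computes exact Shapley values from scratch for a tiny, made-up loan model. It does not use the `shap` library, and the model, feature names and baseline applicant are all assumptions made for illustration.

```python
from itertools import permutations

FEATURES = ["term_years", "interest_rate_pct", "income_thousands"]


def model(x):
    """Hypothetical repayment score; stands in for any black-box model."""
    score = 0.8 - 0.02 * x["term_years"] - 0.03 * x["interest_rate_pct"]
    score += 0.002 * x["income_thousands"]
    # An interaction term, so the model is not purely additive.
    if x["term_years"] > 5 and x["interest_rate_pct"] > 10:
        score -= 0.1
    return score


def shapley_values(instance, baseline):
    """Average each feature's marginal contribution over all feature orderings."""
    totals = {name: 0.0 for name in FEATURES}
    orderings = list(permutations(FEATURES))
    for order in orderings:
        current = dict(baseline)            # start from the reference applicant
        previous = model(current)
        for name in order:
            current[name] = instance[name]  # switch one feature to its real value
            updated = model(current)
            totals[name] += updated - previous
            previous = updated
    return {name: total / len(orderings) for name, total in totals.items()}


baseline = {"term_years": 3, "interest_rate_pct": 5, "income_thousands": 40}
applicant = {"term_years": 10, "interest_rate_pct": 15, "income_thousands": 40}
values = shapley_values(applicant, baseline)

for name, value in values.items():
    print(f"{name:>18}: {value:+.3f}")
```

The values always add up to the difference between the applicant's score and the baseline score, which is what makes them easy to present as "this feature moved the decision by this much". Enumerating every ordering only works for a handful of features, so practical tools rely on approximations.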

LIME (Local Interpretable Model-Agnostic Explanations)

Creates understandable explanations for individual decisions. LIME does not look at the model as a whole. Instead, it works case by case, for example showing the likelihood of a specific person repaying a loan and the main features that influenced that prediction.
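A LIME-style explanation can be sketched as follows: perturb the inputs around one case, weight the perturbed samples by how close they are to it, and fit a simple linear model to the black-box outputs. This is a from-scratch illustration rather than the `lime` library, and the black-box function, kernel width and sample count are assumptions.

```python
import math
import random


def black_box(term_years, interest_rate_pct):
    """Hypothetical non-linear repayment probability; stands in for any model."""
    z = 3.0 - 0.15 * term_years - 0.02 * interest_rate_pct ** 2
    return 1.0 / (1.0 + math.exp(-z))


def solve(a, b):
    """Solve the small linear system a @ x = b by Gaussian elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def local_surrogate(instance, n_samples=500, scale=1.0, kernel_width=1.5, seed=0):
    """Fit a proximity-weighted linear model around one instance."""
    rng = random.Random(seed)
    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        offsets = [rng.gauss(0.0, scale) for _ in instance]
        point = [v + d for v, d in zip(instance, offsets)]
        distance_sq = sum(d * d for d in offsets)
        rows.append([1.0] + offsets)  # intercept column + offsets from the instance
        targets.append(black_box(*point))
        weights.append(math.exp(-distance_sq / kernel_width ** 2))  # nearer = heavier
    k = len(instance) + 1
    # Weighted normal equations: (X^T W X) beta = X^T W y
    xtwx = [
        [sum(w * r[i] * r[j] for r, w in zip(rows, weights)) for j in range(k)]
        for i in range(k)
    ]
    xtwy = [sum(w * r[i] * y for r, y, w in zip(rows, targets, weights)) for i in range(k)]
    return solve(xtwx, xtwy)  # [intercept, slope per feature...]


intercept, slope_term, slope_rate = local_surrogate([5.0, 8.0])
print(f"local effect of term_years:        {slope_term:+.4f}")
print(f"local effect of interest_rate_pct: {slope_rate:+.4f}")
```

The slopes describe the model's behaviour only in the neighbourhood of this one applicant. A different applicant could get a different explanation from the same model.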

These tools can also be combined to highlight how a specific customer compares to the group as a whole.

These methods generally work by using an “explainer model” that looks at the features that went into the main model, together with its output, to estimate the reasons behind a prediction.

Why This Is Essential

For AI adoption to continue, it has to be used ethically, and that has made XAI more essential than ever. A growing number of studies highlight that user trust is fundamentally linked to understanding how AI systems reach their decisions. Businesses are therefore increasingly adopting XAI approaches, not just for technical transparency but also to meet growing regulatory requirements around AI accountability and fairness.

These techniques allow businesses to benefit from more complex models while still being able to justify and explain decision making, showing customers or consumers why the model generated the output that it did without giving away competitive advantages. In the loan example above, a business could show an applicant the top 10 things to improve in order to be accepted for a new loan in the future. This improves trust and transparency in the solution for both users and customers.

Challenges and Limitations of Explainable AI

While explainable and interpretable AI can go a long way towards building trust in systems and decisions, the models themselves still make mistakes, just like we all do. Where a decision may be appealed or a classification is borderline, it is important to keep “humans in the loop”, making people in the business responsible for the actions and decisions made using AI technology. That responsibility gives the creators an interest in understanding how and why the model has made a decision, so they can ensure that it continues to improve, as we all should hope to.

How Businesses Can Start Implementing Explainable AI

Leading guidance stresses that XAI should not be an afterthought. It must be integrated from design → training → deployment.

Organisations should:

Include explainability criteria early in model selection

Build pipelines that automatically generate and store explanations

Validate models using interpretability metrics alongside accuracy metrics

Doing so reduces the “black box” risk and improves compliance readiness.
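As a rough illustration of a pipeline that generates and stores explanations, the sketch below saves each prediction together with its explanation as one JSON record. The scoring function, field names and model version label are hypothetical placeholders for whatever model and explainer a business actually uses.

```python
import json
from datetime import datetime, timezone


def predict_with_explanation(applicant):
    """Hypothetical stand-in returning a score plus per-feature contributions."""
    contributions = {
        "interest_rate_pct": -0.02 * applicant["interest_rate_pct"],
        "term_years": -0.01 * applicant["term_years"],
    }
    return 0.9 + sum(contributions.values()), contributions


def build_record(applicant_id, applicant, model_version="demo-0.1"):
    """Bundle inputs, output and explanation so the decision can be audited later."""
    score, contributions = predict_with_explanation(applicant)
    return {
        "applicant_id": applicant_id,
        "model_version": model_version,
        "created_at": datetime.now(timezone.utc).isoformat(),
        "inputs": applicant,
        "score": round(score, 4),
        "explanation": contributions,
    }


record = build_record("A-001", {"interest_rate_pct": 10, "term_years": 5})
line = json.dumps(record, sort_keys=True)  # one JSON line per prediction
print(line)  # in a real pipeline this line would be appended to a log or database
```

Storing the model version and timestamp next to the explanation means that, if a decision is appealed months later, the business can show what the model saw and why it decided as it did at the time.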

What’s Next: The Future of Explainable AI

XAI is moving from a nice-to-have to a must-have for all AI usage. The accuracy vs interpretability argument is coming to an end, with businesses demanding more from AI solutions, because AI without interpretability can cause ethical, legal, and financial harm.

While regulations currently don’t explicitly call out the need for explainable AI, it pays to focus on transparency and ethics, even while they remain loosely defined terms. As regulation scrambles to catch up with developments in this space, it is now a matter of when, rather than if, Explainable AI becomes a mandatory regulatory requirement for using AI.
