Unlocking the Value of AI for Your Business Series: Ep 5 - Why AI Governance is an enabler, not a blocker

- Branden Millward

- Mar 2

- 5 min read

I hope you have enjoyed this series so far. To round it off: now that you have an idea of how to implement and utilise AI, it is important to understand how to use it safely and with appropriate governance.

Why AI Governance Matters Now

AI Governance is an essential part of using AI successfully. It helps ensure that your AI systems are safe, ethical, and reliable, limits risks such as bias, discrimination, and privacy breaches, and builds trust with your users.

What AI Governance Actually Is

So what is AI Governance? It’s a framework that lays out the rules, best practices, and approved tools your team can use, with the aim of achieving everything we just covered in why AI governance matters. It is not limited to a single part of AI: it covers all the systems the AI uses or interacts with, all the processes that use or interact with it, and any guardrails.

How do we implement this framework?

There are several core principles encompassed by AI Governance:

Accountability and Oversight: This means defining who is responsible for the outcomes produced by the AI system and keeping a human in the loop for high-risk decisions, something we discussed in previous episodes of this series. This might take the form of a RACI model: Responsible (doer), Accountable (owner/signer), Consulted (feedback provider), and Informed (updated party).

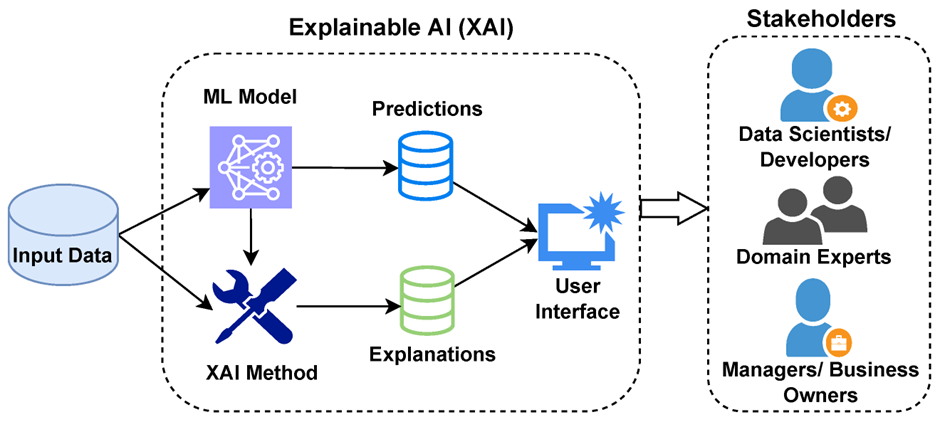

Transparency and Explainability: If you haven’t read the previous episode where we covered this, please go back and check it out LINK

Fairness and Ethics: This is where you should actively monitor for bias in training data and system outcomes to ensure fairness, and make sure you’re using the system for ethical purposes.

Safety and Security: Ensure your system is protected from attacks, just like every other system you implement. This doesn’t change for AI; if anything, it is more important. Additionally, ensure it is reliable and that its outputs are consistent with the use you expect.
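To make the fairness principle a little more concrete, here is a minimal sketch of one possible bias check: comparing the rate of favourable outcomes between groups (often called a demographic parity gap). The sample decisions, the group labels, and the `GAP_THRESHOLD` value are all assumptions for illustration. Which metric and tolerance suit your system is something to agree with your risk team.

```python
from collections import defaultdict


def positive_rate_by_group(records):
    """Return the share of favourable outcomes for each group.

    `records` is an iterable of (group, outcome) pairs, where outcome is
    True for a favourable decision (for example, an application approved).
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        if outcome:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(records):
    """Largest difference in favourable-outcome rate between any two groups."""
    rates = positive_rate_by_group(records)
    return max(rates.values()) - min(rates.values())


# Hypothetical decisions: (group label, favourable outcome?)
decisions = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

GAP_THRESHOLD = 0.2  # assumed tolerance, not a recommended value

gap = parity_gap(decisions)
needs_review = gap > GAP_THRESHOLD  # flag for a human to investigate
```

A gap above the threshold does not prove the system is unfair. It is a prompt for a person to look at the data and the outcomes more closely.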

The Governance Challenges Organisations Face

Organisations rarely struggle with AI governance because they lack the intent. They struggle because the realities of delivery, culture, and tooling collide with the plan they laid out.

The reality is that teams start to adopt tools and build prototypes outside formal processes, creating pockets of risk and making it difficult to maintain a clear view of what models exist, what data they use, and how they behave. Different functions interpret AI, risk, and governance language in their own way. Without shared foundations, policies feel abstract, and controls get applied unevenly. Multiple platforms, cloud services, and GenAI tools enter the organisation through different routes, each bringing its own governance features.

There is a balance to be struck between delivery teams wanting speed, risk teams wanting assurance, and leadership wanting both. Without a balanced model, governance becomes either a blocker or a tick box exercise. To achieve that balance, we also need to modernise our approach to monitoring and governance: new issues like hallucinations, drift, and emergent behaviour go undetected by historic monitoring that was not built to handle them.

So how do we strike that balance and build out the new responsibilities? Well, one way to look at it is as three layers, as shown below. First, the environmental layer: the area your business works in and the laws and regulations that you have to follow. Second, your organisation itself: do you have the capabilities and the strategy in place, and the goals you want to achieve mapped out? Finally, the AI systems themselves: what does day-to-day usage look like, how are you going to develop them, and how are you going to manage them? So, how do we implement this practically?

A Practical Framework for Getting Started

A practical governance framework begins with clarity: a clear plan for the principles and accountability it aims to achieve, giving teams across the business a strong foundation for how AI should be used and who is responsible for decisions. Once that’s in place, organisations can map their current and future plans for AI, including potential use cases, assess the risks that could occur for each, and translate them into workable standards that everyone can understand for day-to-day usage.
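As a rough illustration of mapping use cases, assessing their risk, and translating that into standards, here is a small sketch. The three tiers, the yes/no questions, and the controls attached to each tier are assumptions I have made up for the example, not a standard scheme. Your own tiers should come from your regulatory environment and risk appetite.

```python
from dataclasses import dataclass

# Assumed three-tier scheme; the tier names and controls are illustrative only.
CONTROLS_BY_TIER = {
    "low": ["usage guidelines"],
    "medium": ["usage guidelines", "output monitoring"],
    "high": ["usage guidelines", "output monitoring", "human review of decisions"],
}


@dataclass
class UseCase:
    name: str
    owner: str                  # the accountable person or team
    uses_personal_data: bool
    affects_individuals: bool   # e.g. hiring, lending, or eligibility decisions
    customer_facing: bool


def risk_tier(use_case: UseCase) -> str:
    """Assign a tier from simple yes/no risk questions (assumed rules)."""
    if use_case.affects_individuals:
        return "high"
    if use_case.uses_personal_data or use_case.customer_facing:
        return "medium"
    return "low"


def required_controls(use_case: UseCase) -> list:
    """Translate the assessed tier into the standards a team must follow."""
    return CONTROLS_BY_TIER[risk_tier(use_case)]


# Hypothetical use cases
notes_bot = UseCase("internal meeting notes", "ops team", False, False, False)
cv_screen = UseCase("CV screening", "HR", True, True, False)

notes_controls = required_controls(notes_bot)
cv_controls = required_controls(cv_screen)
```

Keeping the rules this simple is deliberate: a handful of questions that anyone can answer gets used far more than a long risk questionnaire.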

The real shift happens when this framework and these guidelines are backed up by the right technical controls, including monitoring, evaluation metrics, and access management, and when teams are enabled through AI upskilling and change management support that helps people understand not just what to do but why it matters. Governance then becomes an iterative process: start small, embed what works, and mature the framework as adoption grows. I have included a few resources below to help get you started on your own framework.

Where this is being used

In regulated industries, a phased approach allows teams to deploy GenAI safely by combining early risk mapping with strict data handling rules and human oversight. When users start to make their own AI tools, organisations can regain control by introducing a central registry and a lightweight approval flow, giving visibility without slowing teams down. Product teams can even use governance to accelerate delivery when automated evaluation pipelines and clear guardrails remove ambiguity and reduce rework. These stories show that governance isn’t a tick box exercise; it’s a way to create confidence and consistency.
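To show how small a central registry with a lightweight approval flow can start, here is a minimal in-memory sketch. The `Registry` and `ToolRecord` names, the fields, and the rule that owners cannot approve their own tools are all assumptions for illustration. In practice this could just as easily be a shared spreadsheet or a ticketing workflow.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class ToolRecord:
    name: str
    owner: str
    data_sources: List[str]
    status: str = "pending"              # "pending" until someone approves it
    approved_by: Optional[str] = None
    registered_on: date = field(default_factory=date.today)


class Registry:
    """A single place to see which AI tools exist and who owns them."""

    def __init__(self):
        self._tools = {}

    def register(self, name, owner, data_sources):
        if name in self._tools:
            raise ValueError(f"{name!r} is already registered")
        record = ToolRecord(name, owner, list(data_sources))
        self._tools[name] = record
        return record

    def approve(self, name, approver):
        record = self._tools[name]
        # Assumed rule: approval must come from someone other than the owner.
        if approver == record.owner:
            raise PermissionError("owners cannot approve their own tool")
        record.status = "approved"
        record.approved_by = approver
        return record

    def pending(self):
        """Names of tools still waiting for approval, in registration order."""
        return [t.name for t in self._tools.values() if t.status == "pending"]


# Hypothetical usage
registry = Registry()
registry.register("meeting-summariser", "alice", ["calendar", "transcripts"])
registry.register("cv-screener", "bob", ["applicant CVs"])
registry.approve("meeting-summariser", approver="carol")
waiting = registry.pending()
```

The point is visibility: teams can register a tool in a minute, and the organisation always has one list of what exists, what data it touches, and what is still awaiting sign-off.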

The Future of AI Governance

I believe AI Governance is heading towards more adaptive, continuous models. Agentic systems and autonomous workflows will demand stronger oversight and clearer escalation paths. If you haven’t read the last blog, go back and check it out. Continuous evaluation and dynamic risk scoring will replace static assessments, giving organisations a near-real-time view of what their models are doing and how they are performing. Governance will also converge with broader digital controls, creating unified frameworks across data, security, and software. Most importantly, the focus will shift from compliance to capability uplift, helping teams use AI safely and effectively rather than simply avoiding risk.
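As a very simplified picture of what continuous evaluation with a dynamic risk score might look like, the sketch below keeps a rolling window of recent evaluation results and flags when the failure rate crosses an escalation threshold. The class name, window size, and threshold are assumptions for illustration only. Real systems would use richer signals than a single pass/fail check.

```python
from collections import deque


class RollingRiskScore:
    """Track the failure rate of the most recent evaluation checks."""

    def __init__(self, window=50, escalate_at=0.10):
        # Only the latest `window` results are kept; older ones drop off.
        self.results = deque(maxlen=window)  # True means the check failed
        self.escalate_at = escalate_at

    def record(self, failed):
        self.results.append(bool(failed))

    @property
    def score(self):
        """Share of failed checks in the current window (0.0 if empty)."""
        if not self.results:
            return 0.0
        return sum(self.results) / len(self.results)

    def should_escalate(self):
        """True when the score reaches the assumed escalation threshold."""
        return self.score >= self.escalate_at


# Hypothetical stream of evaluation results: 8 passes, then 2 failures
monitor = RollingRiskScore(window=10, escalate_at=0.2)
for failed in [False] * 8 + [True] * 2:
    monitor.record(failed)
escalate_now = monitor.should_escalate()

# Ten clean results push the failures out of the window again
for _ in range(10):
    monitor.record(False)
escalate_later = monitor.should_escalate()
```

Because the window moves, the score recovers once the model behaves again, which is the main difference from a static, point-in-time assessment.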

Effective AI governance is an enabler of innovation, not a constraint. Organisations that invest in shared literacy, aligned responsibilities, and simple, scalable processes will move faster and with greater confidence than those relying on ad-hoc controls. The key is to begin with small, meaningful steps and let the framework grow alongside your AI ambition.

I hope you have enjoyed this series, and feel free to reach out if you’d like to know more or have any questions.
